Fairness in AI Training Models: A Crucial Step Towards Building Trustworthy and Equitable Systems

As artificial intelligence (AI) is deployed across more industries and shapes more of how we live and work, concerns about fairness and bias in AI training models have become increasingly pressing. Fairness in model training is central to building trustworthy and equitable systems that do not perpetuate or amplify existing social and economic inequalities.

The Need for Fairness in AI Training Models

AI systems are only as good as the data they are trained on: if that data encodes bias, the resulting models will tend to reproduce it. Fairness in AI training models aims to keep these systems from discriminating against specific groups or individuals based on characteristics such as age, race, gender, or socioeconomic status. Fairness is not just about avoiding harm, but also about promoting just and equitable outcomes.

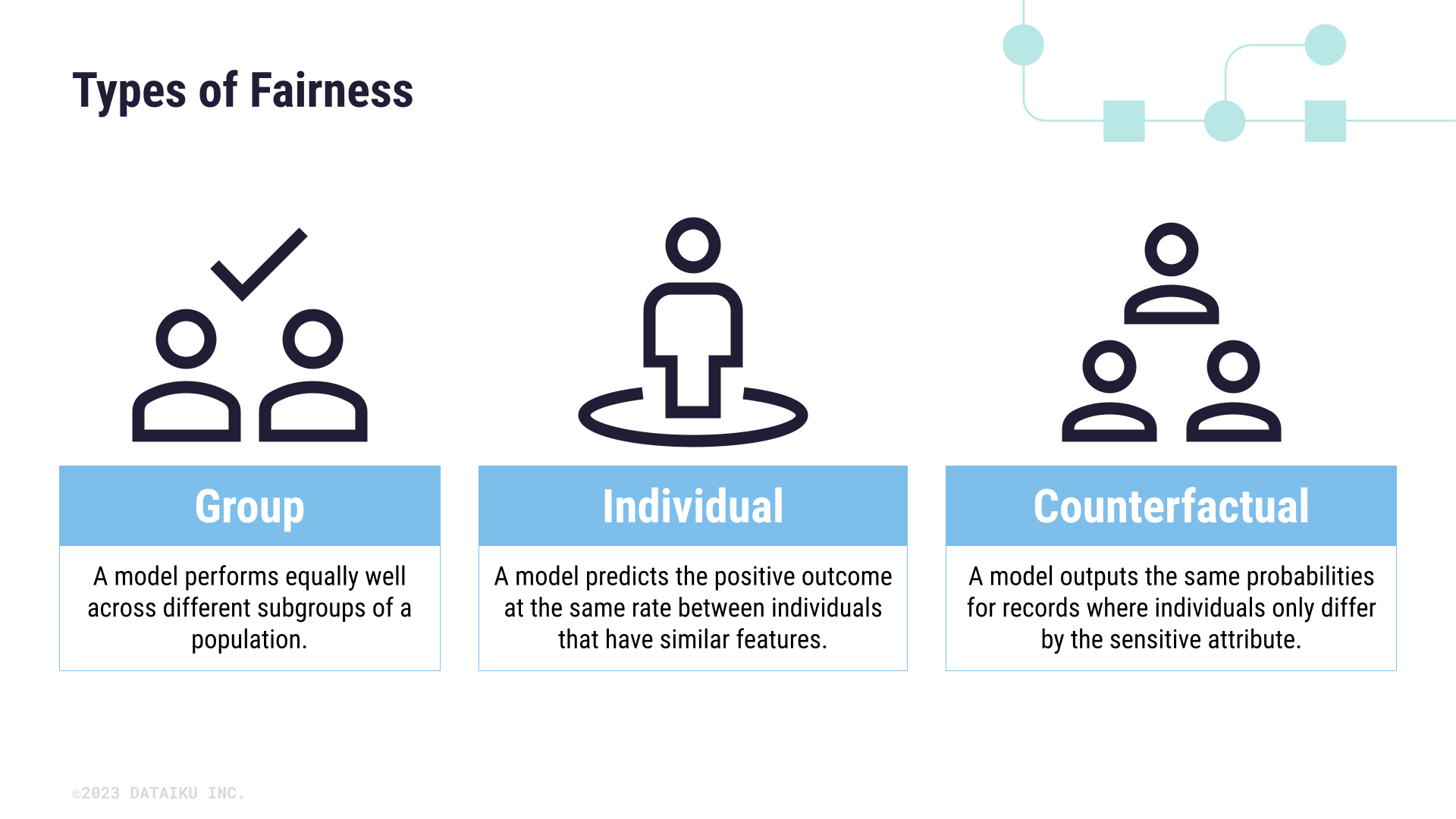

Understanding Fairness in AI Training Models

Fairness in AI training models involves several key concepts, including individual fairness, group fairness, and counterfactual fairness:

- Individual Fairness: This concept requires that similar individuals be treated similarly. For example, in a loan approval system, individual fairness would require that applicants with similar credit profiles receive similar decisions.

- Group Fairness: This concept requires that outcomes be comparable across groups defined by a protected attribute. For example, in a hiring system, a group fairness criterion such as demographic parity would require that candidates of different races or genders are selected at similar rates.

- Counterfactual Fairness: This concept asks whether the decision for an individual would have been the same in a counterfactual world where their protected attribute were different. For instance, in a healthcare system, counterfactual fairness would ask whether a patient with the same condition would have received the same treatment had they belonged to a different demographic group.
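Two of these notions can be made concrete with a small sketch. The Python example below is illustrative only: the function names, the toy loan models, and the data are all hypothetical, and demographic parity is used as just one of several possible group fairness criteria. The attribute-flip check is only a rough proxy for counterfactual fairness, which in full requires a causal model of how the protected attribute influences other features.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the fraction of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def attribute_flip_changes(model, applicant, attribute, alternative):
    """Naive counterfactual proxy: does the decision change when ONLY the
    protected attribute is changed? (Ignores causal effects on other features.)"""
    flipped = dict(applicant, **{attribute: alternative})
    return model(applicant) != model(flipped)


# Hypothetical toy models: one ignores the group, one depends on it.
def fair_model(applicant):
    return int(applicant["credit_score"] >= 650)


def biased_model(applicant):
    return int(applicant["credit_score"] >= 650 and applicant["group"] == "a")


# Hypothetical predictions for eight applicants in two groups.
predictions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(predictions, groups)

applicant = {"credit_score": 700, "group": "b"}
fair_flip = attribute_flip_changes(fair_model, applicant, "group", "a")
biased_flip = attribute_flip_changes(biased_model, applicant, "group", "a")

print(rates, gap, fair_flip, biased_flip)
```

A gap near zero suggests similar selection rates across groups; it says nothing about individual fairness, which would need a similarity measure between applicants.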

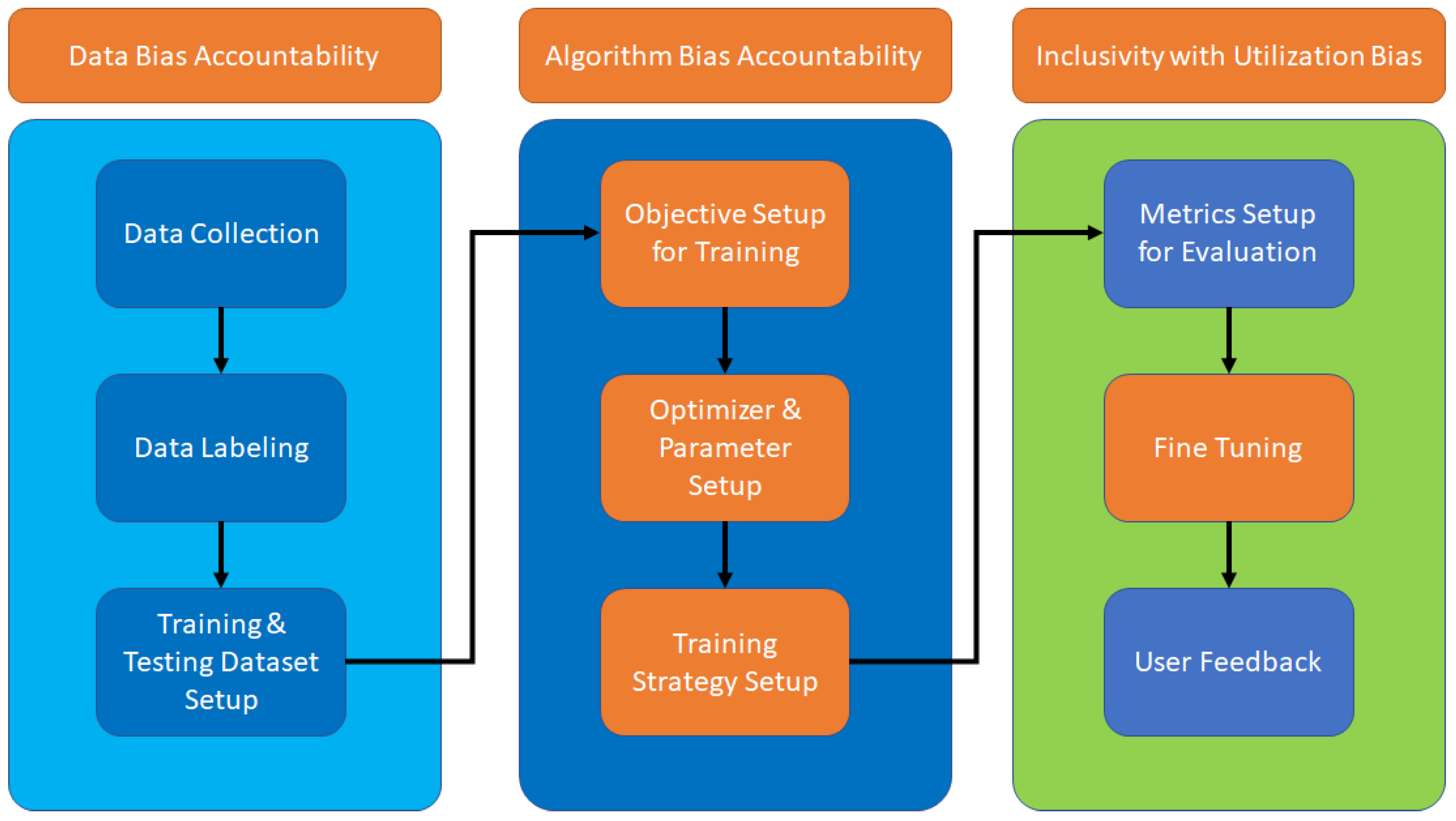

Addressing Bias in AI Training Models

Bias in AI training models can arise from various sources, including data, algorithms, and human decision-making. To address it, AI developers and researchers intervene at different stages of the pipeline, using the following families of techniques:

- Data Preprocessing: This involves removing or mitigating bias in the data before the model is trained, for example by resampling or reweighting under-represented groups.

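- Algorithmic Fairness Techniques: This involves changing how the model is trained, for example with fairness-aware algorithms that build fairness constraints into the learning objective, or with inverse propensity scoring to correct for selection bias in the training data.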

- Post-processing Methods: This involves adjusting the model's outputs after training, for example by tuning decision thresholds, so that the final decisions satisfy a chosen fairness criterion.
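As one illustration of the preprocessing stage, the sketch below implements reweighing, a common technique that is not named in this article and is used here only as an example. Each training example gets a weight so that, after weighting, the label is statistically independent of the group. The function name and the data are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each example by (count expected if group and label were
    independent) / (count actually observed for that group-label pair)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))

    weights = []
    for g, y in zip(groups, labels):
        expected = group_counts[g] * label_counts[y] / n
        weights.append(expected / joint_counts[(g, y)])
    return weights


# Hypothetical training labels: group "a" has mostly positive labels,
# group "b" mostly negative ones.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
for g, y, w in zip(groups, labels, weights):
    print(g, y, round(w, 3))
```

Over-represented combinations (such as positive labels in group "a") are down-weighted and under-represented ones are up-weighted. Many training APIs accept such values as per-sample weights, though whether a given estimator does is something to check in its documentation.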

Fairlearn: A Python Library for Fairness in AI Training Models

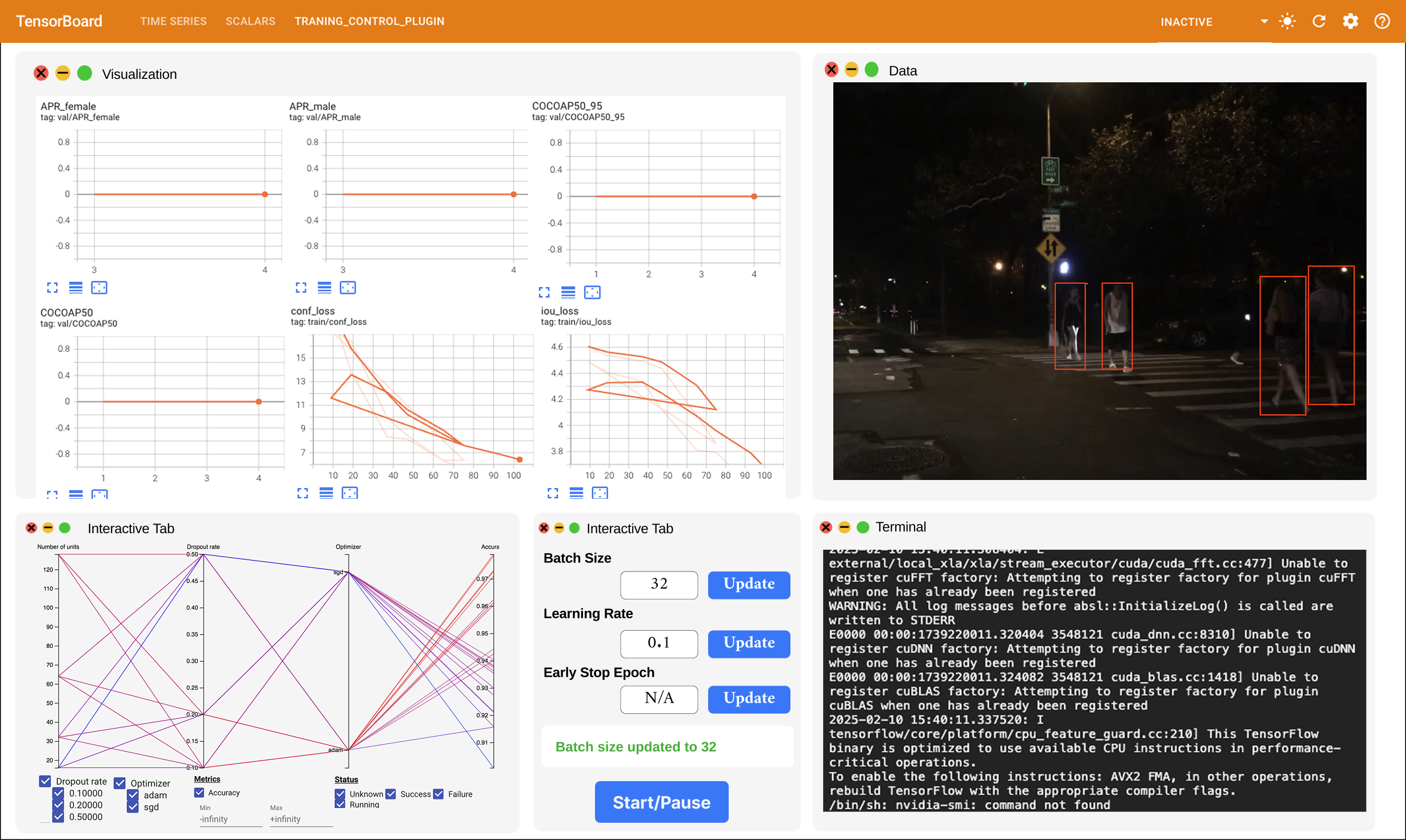

Fairlearn is an open-source Python library that helps data scientists and developers assess and improve the fairness of machine learning models. It provides tools for detecting and mitigating bias in AI systems. Fairlearn was originally described as having two components: an interactive visualization dashboard and unfairness mitigation algorithms. In more recent releases, assessment is done mainly through fairness metrics computed per group, and the dashboard is no longer part of the core package. Both the assessment tools and the mitigation algorithms are designed to help navigate the trade-offs between fairness and model performance.

Conclusion

Fairness in AI training models is a complex and multifaceted issue that requires a comprehensive approach. By understanding the different fairness concepts and addressing bias at each stage of model development, developers and researchers can build trustworthy and equitable systems. As AI is adopted more widely, building fairness into training is essential to keep these systems from perpetuating or amplifying existing social and economic inequalities.
